Why is evaluation important for SRGBV?

Evaluations can help programme staff and policy-makers determine not only whether SRGBV interventions have had an impact, but also whether an intervention is relevant, efficient, effective and sustainable (OECD DAC, 1991). Process evaluations can also help ensure that a programme is on track and indicate whether and how it should be adjusted.

There are several types of evaluation, and the appropriate choice depends on the intervention being evaluated, the purpose of the evaluation, and the context and resources available. Evaluations can be formative (taking place before or during a project’s implementation, with the aim of improving its design and performance) or summative (drawing lessons from a completed intervention that is no longer being implemented). The main types of evaluation and their use in SRGBV programming are summarized below.

Summary of evaluation types and use for SRGBV programming

| Evaluation type | Description | Why use in SRGBV programming? |
|---|---|---|
| Design or ex-ante evaluation | Takes place at the SRGBV programme design stage. Supports the definition of realistic programme objectives and validates the programme’s cost-effectiveness and its potential to be evaluated | Given the complex context in which SRGBV programmes operate, this type of evaluation supports the design process and can help ensure that realistic goals and targets are set, including at impact level |
| Process evaluation | Assesses programme implementation and policy delivery | Helps to answer ‘how’ and ‘why’ an SRGBV programme is or is not working |
| Output-to-purpose or mid-term evaluation | Takes place halfway through the programme to assess the extent to which delivered outputs contribute to achieving outcome-level results | Allows for reflection at mid-term and enables course correction if necessary. Good for transferring learning into action and for improvement during implementation, but less useful for validating programme design, which would come rather late at this stage |
| Outcome evaluation | Focuses on short- and medium-term outcomes, such as changes in knowledge, attitudes and behaviour towards SRGBV, at the end of a programme | A good option when quick decision-making is important, for example when deciding whether to extend an SRGBV programme or when shaping SRGBV policies. Useful for understanding change processes at the outcome level of the logframe, but does not normally focus explicitly on impact |
| Impact evaluation | Assesses the changes that can be attributed to a particular intervention, such as a project, programme or policy. Impact evaluations involve counterfactual analysis, i.e. comparing what actually happened with what would have happened in the absence of the intervention | Typically targets long-term impacts, such as changes in SRGBV prevalence rates or social norms, but in practice often includes medium-term outcomes, especially as long-term outcomes are difficult to assess even three to five years after a programme intervention |

Adapted from DFID (2012)

To date, most studies and evaluations of SRGBV interventions have been small-scale and qualitative, and their findings have mainly been used to appraise the intervention itself rather than to inform broader policy and scale-up. This is partly due to the time and cost involved in conducting rigorous impact evaluations, and partly due to the additional methodological, safety and ethical measures required when researching SRGBV (see section on Methodological, ethical and safety recommendations).

The country examples below illustrate how the impact of interventions aimed at reducing SRGBV has been evaluated.


Country example: Evaluating the impact of the Good School Toolkit, Uganda

The Good School Study used several evaluation methods to assess the impact of the Good School Toolkit: a cluster-randomized controlled trial (RCT), a qualitative study, an economic evaluation and a process evaluation. The Good School Toolkit, developed by Raising Voices, aims to prevent violence against children in schools and to improve the quality of education. The toolkit seeks to involve the entire school and the surrounding community in transforming the school into a child-friendly, non-violent and participatory learning environment. To measure the toolkit’s impact, researchers from the London School of Hygiene and Tropical Medicine, in partnership with Raising Voices, conducted an RCT in 42 primary schools in Luwero District, Uganda, from January 2012 to September 2014. The study aimed to determine whether the toolkit could reduce physical violence by school staff against students. Key elements of the RCT design included:

The study found that the toolkit reduced physical violence by school staff by 42 per cent, an impressive change over a relatively short (18-month) period. Students in the intervention schools reported improved feelings of well-being and safety at school. Nevertheless, levels of school violence remained high in both the control and intervention schools.

Source: Devries et al (2015)


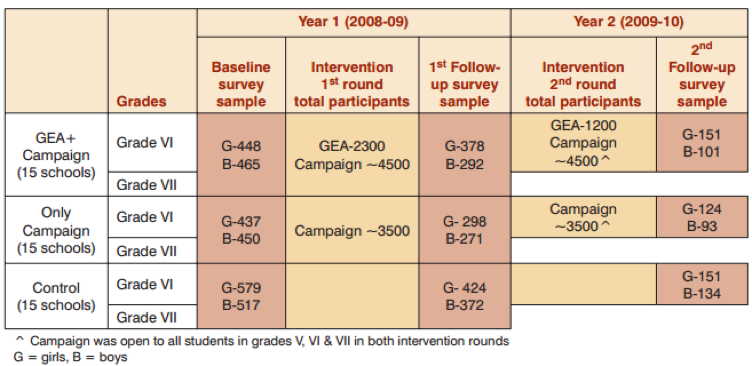

Example: Evaluating the impact of the Gender Equity Movement in Schools (GEMS) curriculum, India

GEMS is a school-based intervention for children aged 12–14. It uses participatory methodologies such as role plays, games, debates and discussions to teach children how to challenge gender inequality and violence. GEMS was developed by the International Center for Research on Women (ICRW), in partnership with the Committee of Resource Organizations for Literacy (CORO) and the Tata Institute of Social Sciences (TISS).

A quasi-experimental study was conducted to assess the outcomes of the GEMS programme in a randomly selected sample of 45 public schools in Mumbai. The schools were randomly and equally distributed across three arms, with 15 schools in each: (1) a control arm; (2) an intervention arm with both the school-based campaign and the group education activities (GEA+); and (3) an intervention arm with only the school-based campaign (‘Only’). A total of 2,035 students (1,100 girls and 935 boys) across the three arms completed self-administered surveys covering three broad areas: gender roles, violence, and sexual and reproductive health (SRH). A small sample of students also took part in in-depth interviews.

Key findings included:

Following the success of the first pilot programme, the GEMS approach is now being scaled up to 250 schools in Mumbai. It is also being rolled out in 20 schools in Viet Nam.

Source: ICRW (2011)


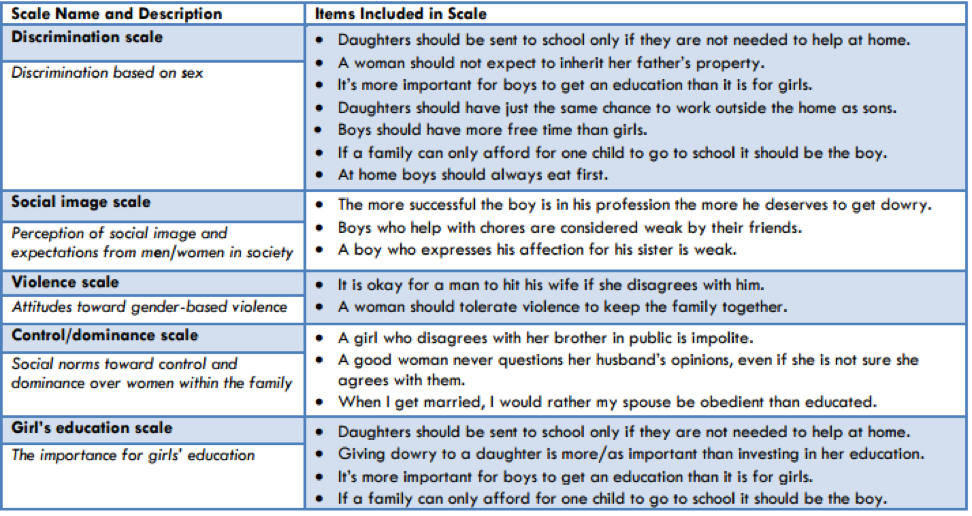

Example: Using participatory methods to evaluate the impact of the Choices curriculum, Nepal

Participatory methodologies were used as part of a pre-post, quasi-experimental evaluation of the pilot of the Choices curriculum in Siraha district, in the Terai region of Nepal. The curriculum consists of eight developmentally appropriate activities that support young adolescents aged 10 to 14 to explore alternative views of masculinities and femininities. By changing attitudes towards gender norms, the programme aimed to contribute to better future sexual and reproductive health outcomes for participants and their communities.

Comparing children’s responses before and after participating in Choices, the pilot evaluation found significant changes in their gendered attitudes and behaviour relating to gender discrimination, social image, control and dominance, violence, attitudes to girls’ education and acceptance of traditional gender norms. The study found that the curriculum helped students recognize that sexual harassment, and the teasing of boys who do not conform to dominant gender norms, are not appropriate. Boys expressed more appreciation for the work their sisters did and reported helping more with domestic tasks. Girls reported speaking out more in class and talking to their parents about delaying marriage.

Two of the participatory methods used in this evaluation were:

Gender Attitudes Card Game/Pile Sort: The aim of the card game was to sort gender value statements (see box below), printed on colour-coded cards, into agree/disagree piles. The statements were grouped into five categories: discrimination, social image, violence, control/dominance and girls’ education. The young people were asked to read the statement on each card (for low-literate participants, the statement was read aloud to them) and then place the card into a container marked ‘agree’, ‘disagree’ or ‘strongly agree’.
The evaluation found that before the Choices curriculum, 40 per cent of participants believed “it was okay for a man to hit his wife”; in the post-intervention evaluation, this had dropped to 10 per cent.

‘Arun’s dilemma’: This storytelling method aims to assess acceptance of traditional and non-traditional gender roles. The fictional character ‘Arun’ wants to help his sister with her household chores, but is afraid of what his parents and friends might say about him doing ‘female’ activities. After listening to Arun’s dilemma, children were asked whether they had any advice for Arun or his sister. They were also asked whether they agreed or disagreed with various gender role statements, such as “Boys who help with household chores are considered weak by their friends”. Before the intervention, most children said that Arun should listen to his parents and not help his sister. After the intervention, however, the majority of children in the experimental group, who had taken part in the Choices curriculum, advised Arun to help his sister; this change was statistically significant.

Sources: The Institute for Reproductive Health (2011); Lundgren et al (2013)